Jim Handy, The Memory Guy, posted a short blog entry about the 3-petaFLOP (peak) SuperMUC supercomputer at the Leibniz Supercomputing Centre on the outskirts of Munich, Germany. (The “MUC” in SuperMUC is the 3-letter code for the Munich airport. Now that’s esoteric!) According to the Samsung announcement, the SuperMUC’s 147,456 microprocessor cores, contained in 18,432 Intel Xeon Sandy Bridge-EP multicore microprocessors, are teamed with 324 Tbytes of Samsung Green 30nm-class DDR3 SDRAM modules. By Handy’s calculation, that’s a total of 746,496 DRAM chips.

Sounds like a lot, no? Well, Handy’s calculations say that’s about half a day of fab production for one of Samsung’s DRAM fabs. Using an average market price of $10.45 per Gbyte for the past year, Handy then calculates that the value of the DRAMs is perhaps as much as $3.5 million, although with the volume purchase we’re talking about here, it’s likely less.
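If you want to check that arithmetic yourself, here's a minimal Python sketch of the DRAM valuation. It assumes binary units (1 Tbyte = 1,024 Gbytes), which is an assumption on my part and not something stated in Handy's post. The function name `dram_value_usd` is purely illustrative.

```python
def dram_value_usd(capacity_tbytes, price_per_gbyte, gbytes_per_tbyte=1024):
    """Estimate the market value of a DRAM pool at an average per-Gbyte price.

    gbytes_per_tbyte defaults to 1024 (binary units); that is an assumption,
    so pass 1000 to see the decimal-unit figure instead.
    """
    return capacity_tbytes * gbytes_per_tbyte * price_per_gbyte


# Figures quoted above: 324 Tbytes of DRAM at an average of $10.45 per Gbyte.
value = dram_value_usd(324, 10.45)
print(f"${value / 1e6:.2f} million")  # prints "$3.47 million"
```

With decimal units (1 Tbyte = 1,000 Gbytes) the same calculation gives roughly $3.39 million, so either way the result lands near the "as much as $3.5 million" figure.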

But the absolute cost of the DRAM is not the point.

The point is that the SuperMUC requires “only” 3.52 MWatts of electricity to run, which Handy pegs at $3 million per year, not including cooling costs. When you include cooling costs, one year’s worth of electricity to power and cool the SuperMUC costs more than the DRAM.
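To put the power bill in perspective, here's a rough Python sketch that works backward from the two numbers above to the electricity rate they imply. It assumes the machine draws the full 3.52 MW around the clock for a 365-day year, which is a simplification of mine. The function names are illustrative only.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(power_mw):
    """Energy used in one year by a load drawing power_mw megawatts continuously."""
    return power_mw * 1000 * HOURS_PER_YEAR


def implied_rate_usd_per_kwh(annual_cost_usd, power_mw):
    """Electricity rate implied by a yearly bill for a continuous load."""
    return annual_cost_usd / annual_energy_kwh(power_mw)


# Figures quoted above: 3.52 MW and roughly $3 million per year.
energy = annual_energy_kwh(3.52)
rate = implied_rate_usd_per_kwh(3_000_000, 3.52)
print(f"{energy / 1e6:.1f} GWh per year, about ${rate:.3f} per kWh")
```

Under those assumptions the SuperMUC consumes about 30.8 GWh per year, and the $3 million figure works out to a bit under 10 cents per kWh.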

But wait, there’s more.

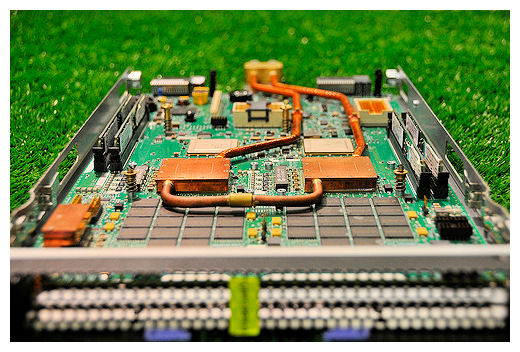

The SuperMUC uses an unusual hot-water cooling system to cool the Xeon processors. It’s a new form of cooling invented by IBM (the system supplier of the SuperMUC) called Aquasar, which reportedly cuts the energy needed for cooling by 40% by using 60°C (or perhaps 45°C depending on the reference) water for cooling. The cooling water is piped through copper cooling blocks directly connected to the processor die. The water flows through microchannels in the copper. Here’s a photo of an Aquasar processor module from Wikipedia:

More Aquasar details here.

You don’t need as much energy to chill water down to 60°C as you do to make it colder. During winter months, waste heat from the SuperMUC will be used to heat buildings.
